For memory and storage technologies, bandwidth can be increased through parallelism but the time to access the technology is relatively constant. That led to storage technology becoming the bottleneck in overall performance.

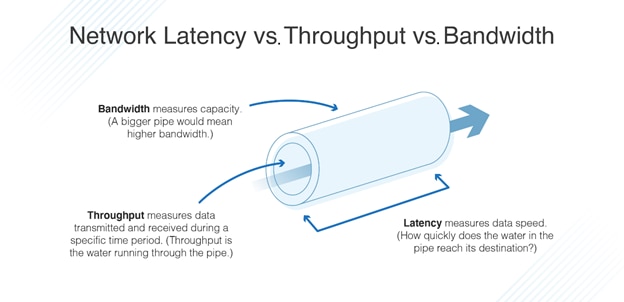

But as CPU processing times have dropped, the time to get data from HDDs -the drive latency-did not drop correspondingly. Those improvements have allowed processors to process more instructions, and therefore more data, in the same, or less time than previous generation processors. Transistors have steadily increased in number according to Moore’s law, 2 while multicore architectures have continued to evolve. 1 Patterson showed that bandwidth has historically improved at a much faster rate than latency. Building on a key study by David Patterson, Figure 1 adds SSD data points to Patterson’s “latency lags bandwidth” chart. To illustrate the march of technologies it is useful to compare relative bandwidth performance versus latency improvement over time for various storage media. Maintaining Latency and Bandwidth Balance as Technologies March Forward As CPU performance has increased over time, storage latency has not kept pace, becoming a drag on overall system performance gains. That’s because like the HDDs of 10 years ago, these SSDs require the processor to wait too long for data, adding latency that can hold back systems from achieving the performance levels that modern CPUs are capable of delivering. Now, even fast NAND SSDs are no longer adequate for today’s data-driven applications that need to access and process data in real time or near real time. As a result, NAND-based SSDs have been widely adopted in the market. The arrival of NAND solid state drives (SSDs) offered another place to store data, and speed access to more of the dataset. Accesses to high latency HDDs simply wasted too many processor cycles to wait for data. Ten years ago when the only other available data store in many systems was a slow hard disk drive (HDD), there was often little choice. Increases in dataset size can be addressed by increasing the amount of DRAM in the system, but that is prohibitively expensive. 
Memory and Storage – A (Very) Brief HistoryĭRAM is a very high bandwidth low latency data store, but relatively expensive per bit.
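
The observation that bandwidth can be scaled through parallelism while access latency stays roughly constant can be made concrete with a small back-of-the-envelope model. The sketch below is only an illustration: the device figures (100 µs access latency, 500 MB/s per device), the 4 KB request size, the 3 GHz clock, and all function names are hypothetical assumptions, not measurements from the article, and the model idealizes parallel devices as scaling bandwidth linearly.

```python
def aggregate_bandwidth(per_device_mb_s: float, devices: int) -> float:
    """Idealized aggregate bandwidth: independent devices add up linearly."""
    return per_device_mb_s * devices


def single_access_time_us(latency_us: float, size_kb: float,
                          bandwidth_mb_s: float) -> float:
    """Time for one request: fixed access latency plus transfer time."""
    transfer_us = size_kb / 1024 / bandwidth_mb_s * 1_000_000
    return latency_us + transfer_us


def stalled_cycles(latency_us: float, clock_ghz: float) -> float:
    """CPU cycles that elapse while a single access is outstanding."""
    return latency_us * clock_ghz * 1_000


# Hypothetical device parameters (assumptions for illustration only).
LATENCY_US = 100.0
PER_DEVICE_MB_S = 500.0

for n in (1, 2, 4, 8):
    bw = aggregate_bandwidth(PER_DEVICE_MB_S, n)
    t = single_access_time_us(LATENCY_US, size_kb=4, bandwidth_mb_s=bw)
    print(f"{n} device(s): {bw:.0f} MB/s aggregate, 4 KB read takes {t:.1f} us")

cycles = stalled_cycles(LATENCY_US, clock_ghz=3.0)
print(f"Cycles elapsed during one access at 3 GHz: {cycles:.0f}")
```

Under these assumptions, going from one device to eight multiplies aggregate bandwidth by eight, yet the time for a single small read barely moves, because the fixed access latency dominates the transfer time. That fixed wait is what the processor spends stalled.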
